Welcome to the first series of MiTornAve. It is a pleasure to have you all here!

Ten years have already passed since the historic match between AlphaGo and Lee Sedol in 2016. We are now truly living in the "AI Era." Have you ever wondered how these AIs, which have moved beyond simple tools to permeate every corner of our daily lives, actually think and operate?

I designed this series as a comprehensive guide, from fundamentals to advanced concepts, focusing on Neural Networks, which were my major and research subject. The current pace of AI development is staggering. While I may not have a definitive answer to "What exactly are these entities, and where should they be used?", I have compiled my research and experiences in an attempt to find one.

We have prepared everything from basic theories to Colab code for hands-on practice. Let's step into the heart of AI together! We begin our journey with the story of AI's first cell: the "Perceptron."

1. Neurons, the Starting Point of Thought: The Semiconductors in Our Heads

How do humans "think" and "recognize" objects? To answer this, scientists began dissecting the human brain in the late 19th century. They discovered that our brains contain about 86 billion special nerve cells called "Neurons," forming a massive network.

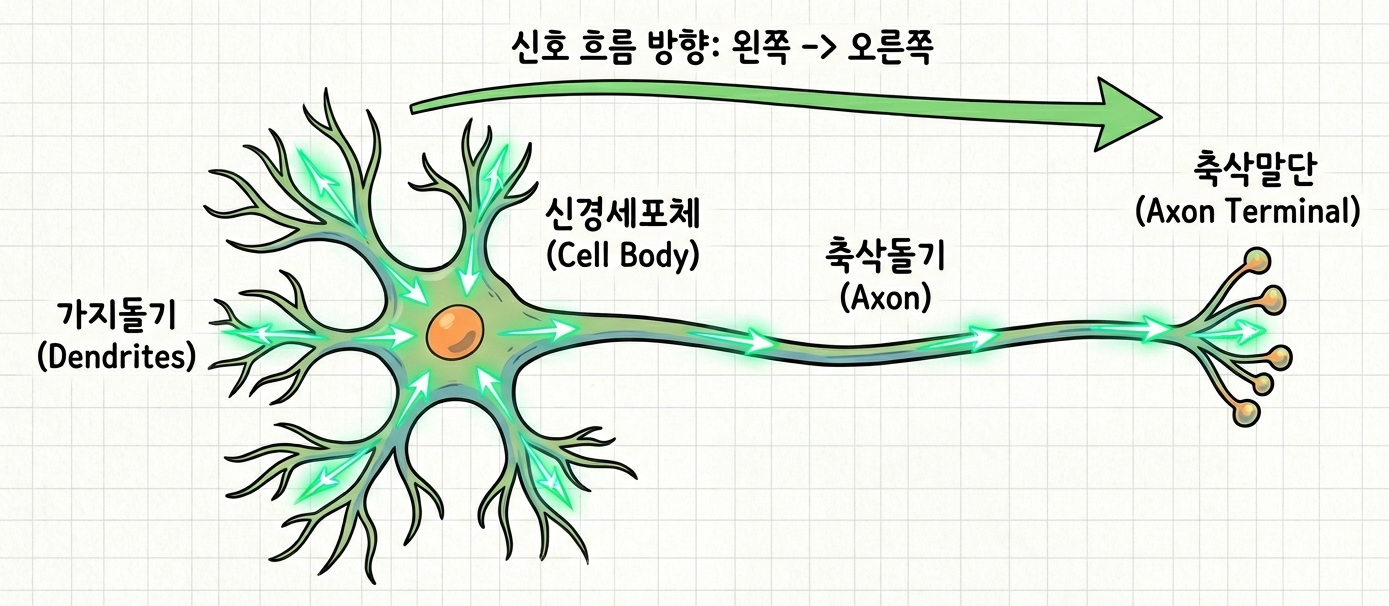

The operating principle of a neuron is surprisingly simple. Many of these simple units stack up to create various electrical signals, which then translate into "thought." Let's look at the diagram.

Dendrites: Act as antennas that receive numerous "electrical signals" transmitted from other external neurons.

Cell Body: Collects the various signals coming through the antennas. Crucially, it doesn't let every signal pass. It only transmits to the next stage if the sum of the signals exceeds a certain "Threshold."

Axon: A highway that fires the strong signal that crossed the threshold to the next neuron.

Synapse: The narrow gap between neurons where signal strength is adjusted. Frequently used paths widen, while unused ones narrow. This is the reality of "learning."

The most important part is the Cell Body. It does not pass all signals; it only triggers the next neuron based on a specific threshold condition.

But why are we talking about brain cells all of a sudden?

2. The Arrival of the Perceptron: A Psychologist’s Challenge, "Can Machines Learn?"

"Can the human thought process be translated into a mathematical formula?"

Everything started with this bold question. In 1957, Frank Rosenblatt, a psychologist at the Cornell Aeronautical Laboratory, introduced a mathematical model that mimicked the structure of a neuron: the "Perceptron."

Interestingly, the creator was a psychologist, not an engineer. While mainstream academia at the time was focused on "Symbolism" (manually inputting every rule into a machine), Rosenblatt believed that machines, like the human brain, should learn by adjusting connection strengths themselves.

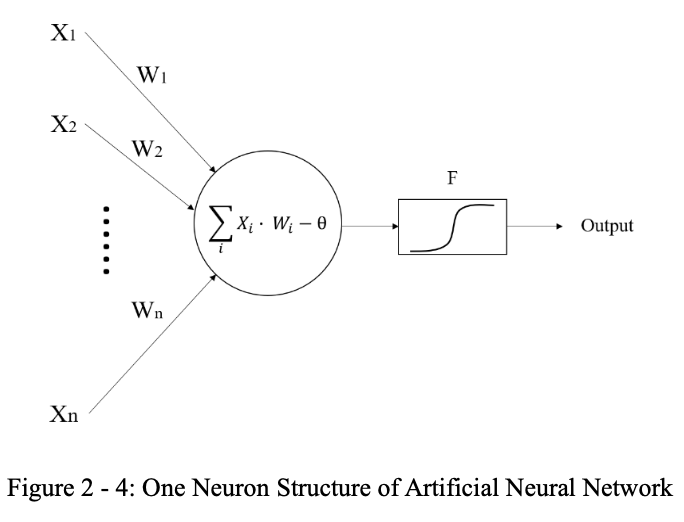

A single Perceptron. Remarkably, it is the spitting image of a neuron's structure.

Rosenblatt translated the parts of a neuron into mathematics as follows:

Input (x): Electrical signals entering the dendrites.

Weight (w): Signal strength adjusted at the synapse (determining which information is more important!).

Weighted Sum: The value obtained by multiplying inputs by their weights and adding them all up.

Activation Function (Threshold f): If the sum exceeds the threshold, it outputs 1 (transmit); otherwise, it outputs 0 (block).
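The four parts above can be sketched in a few lines of Python. This is only a minimal illustration, not Rosenblatt's original formulation: the function names `step` and `perceptron_output`, and the example inputs, weights, and thresholds, are made up for this sketch.

```python
def step(weighted_sum, threshold):
    """Threshold activation: 1 (transmit) if the sum exceeds the threshold, else 0 (block)."""
    return 1 if weighted_sum > threshold else 0


def perceptron_output(inputs, weights, threshold):
    """Multiply each input by its weight, add everything up, then apply the threshold."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    return step(weighted_sum, threshold)


# Hypothetical example values, chosen only for illustration.
inputs = [1.0, 0.0, 1.0]
weights = [0.5, -0.2, 0.4]

# The weighted sum is 0.5 + 0.0 + 0.4 = 0.9.
print(perceptron_output(inputs, weights, threshold=0.8))  # 1: the sum exceeds 0.8
print(perceptron_output(inputs, weights, threshold=1.0))  # 0: the sum does not exceed 1.0
```

Note that the same inputs and weights produce a different output once the threshold moves, which is exactly the "gatekeeper" role of the cell body.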

"The Birth of the Self-Learning Machine"

The debut of the Perceptron was an even bigger sensation than today’s ChatGPT craze. In 1958, The New York Times featured the invention, stating it was the "embryo of an electronic computer that [the Navy] expects will be able to walk, talk, see, write, reproduce itself and be conscious of its existence."

Though it was a simple structure that essentially just drew a line, the Perceptron was the first in human history to define the abstract concept of "Learning" as a "mathematical mechanism." The way it performed "Self-Correction" by adjusting weights (w) when data was wrong was like planting the seed of intelligence in a machine.
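To make "Self-Correction" concrete, here is a minimal sketch of a single correction step. It assumes the commonly taught perceptron update rule (nudge each weight by learning rate × error × input). The learning rate `lr`, the fixed threshold of 0.15, and the function names are assumptions introduced for this example, not details taken from the history above.

```python
def predict(x, w, threshold):
    """Output 1 if the weighted sum exceeds the threshold, else 0."""
    weighted_sum = sum(xi * wi for xi, wi in zip(x, w))
    return 1 if weighted_sum > threshold else 0


def correct(x, target, w, threshold, lr=0.1):
    """Return new weights after one self-correction step.

    error is +1 (should have fired), -1 (should not have fired), or 0 (correct).
    Weights only move when the prediction was wrong.
    """
    error = target - predict(x, w, threshold)
    return [wi + lr * error * xi for wi, xi in zip(w, x)]


# Hypothetical starting point: all weights zero, one training example.
w = [0.0, 0.0]
threshold = 0.15
x, target = [1, 1], 1

before = predict(x, w, threshold)   # 0: wrong, the target is 1
w = correct(x, target, w, threshold)
after = predict(x, w, threshold)    # 1: the weights grew enough to cross the threshold

print(before, w, after)
```

When the prediction is already right, the error is 0 and the weights stay where they are, so the machine only changes when it has made a mistake.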

3. Decision Boundary: Setting the Standard with a Single Line

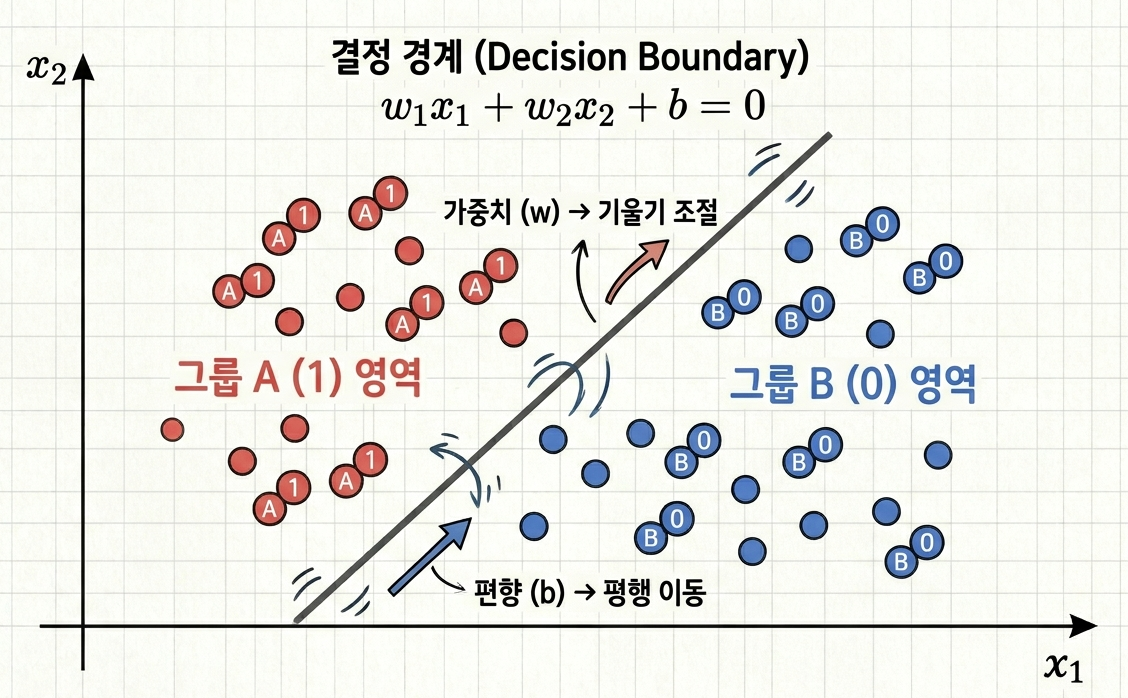

The most intuitive way to understand how a Perceptron classifies data is through "Geometry." Imagine a 2D plane.

Suppose red dots (Group A) and blue dots (Group B) are scattered across a piece of paper. The Perceptron’s sole mission is to draw a single straight line that perfectly separates these two groups. This line is the Decision Boundary—the standard by which AI judges the world.

The formula for a Perceptron is:

y = f(w_1x_1 + w_2x_2 + b)

If we remove the activation function f and set the inner expression to zero, we get the "equation of a line" we learned in middle school:

w_1x_1 + w_2x_2 + b = 0

The Perceptron moves and rotates this line as it views the training data. Weights (w) change the slope of the line, and the Bias (b) shifts the line (parallel translation). Once the optimal position is found, the Perceptron declares:

"Everything above this line is Red (1)."

"Everything below this line is Blue (0)."

This is the principle of "Classification," the most fundamental operation of today’s massive AI systems. The Perceptron was a "dichotomous thinker," organizing a complex world with a single straight line.
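The link between the weights and the line can be checked numerically. Rearranging w_1x_1 + w_2x_2 + b = 0 for x_2 gives a slope of -w_1/w_2 and an intercept of -b/w_2 (valid only when w_2 is not zero). The sketch below uses hypothetical weights chosen for illustration, and it assumes w_2 is positive, which is what makes "above the line" correspond to output 1.

```python
def boundary_slope_intercept(w1, w2, b):
    """Rewrite w1*x1 + w2*x2 + b = 0 as x2 = slope * x1 + intercept."""
    if w2 == 0:
        raise ValueError("w2 is zero: the boundary is a vertical line")
    return -w1 / w2, -b / w2


def classify(x1, x2, w1, w2, b):
    """Perceptron output with a step activation at zero."""
    return 1 if w1 * x1 + w2 * x2 + b > 0 else 0


# Hypothetical weights and bias, for illustration only.
w1, w2, b = 1.0, 1.0, -1.5

print(boundary_slope_intercept(w1, w2, b))     # (-1.0, 1.5)
print(classify(1, 1, w1, w2, b))               # 1: the point lies above the line
print(classify(0, 0, w1, w2, b))               # 0: the point lies below the line

# Changing only the bias shifts the line without rotating it.
print(boundary_slope_intercept(w1, w2, -0.5))  # (-1.0, 0.5)
```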

4. The Intersection of Hope and Despair: Conquering Logic Gates and the Fatal "XOR" Trap

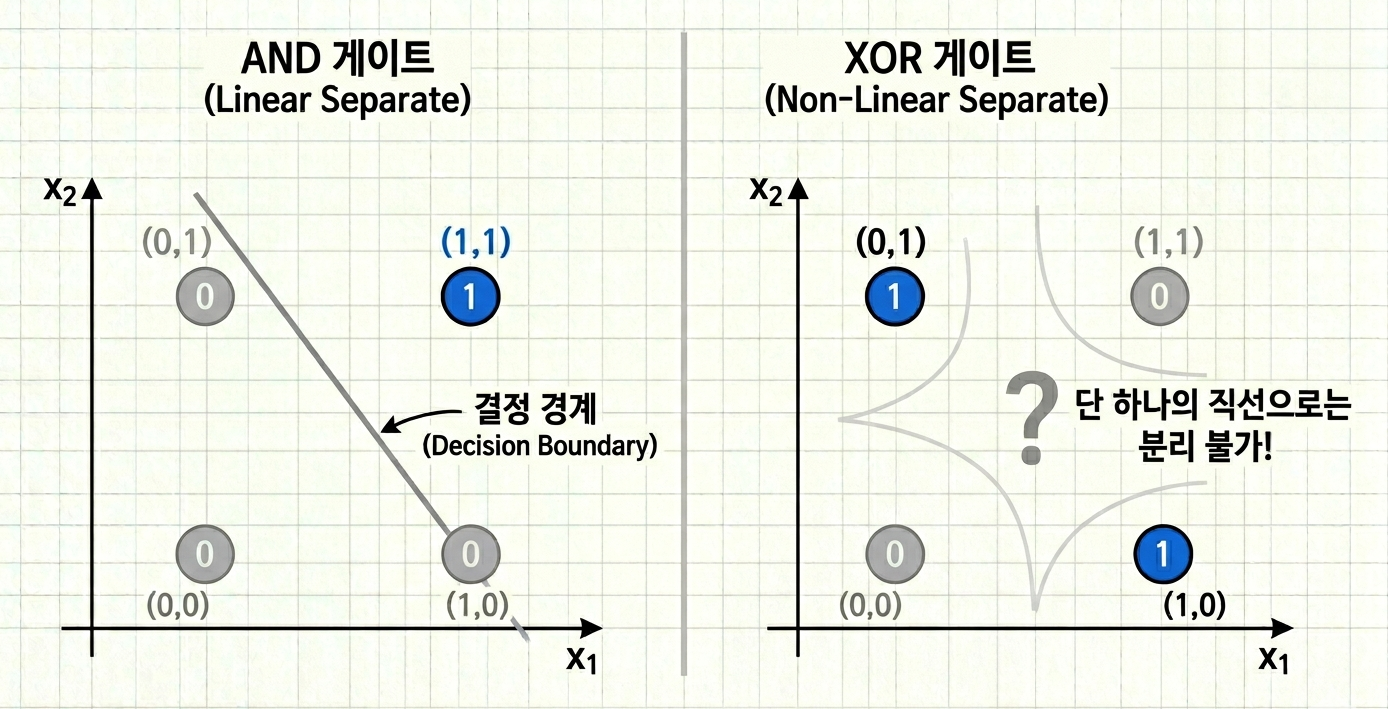

Let's test if the Perceptron can handle basic "Logic Gates."

The Prelude of Hope: Learning AND/OR Gates Easily

First, let's teach it the AND operation, where the output is 1 only if both inputs are 1.

(0, 0) = 0

(1, 0) = 0

(0, 1) = 0

(1, 1) = 1 (only when both are 1!)

The Perceptron easily found a line to isolate (1, 1). It succeeded with the OR gate just as easily. Academia was thrilled, hoping that connecting thousands of these "cells" would create human-level intelligence.
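The AND and OR experiments can be reproduced with a short training loop. This is a minimal sketch assuming the standard perceptron update rule with a bias term and a learning rate of 1. The names `train`, `AND_DATA`, and `OR_DATA`, and the cap of 100 passes, are choices made for this example.

```python
def predict(x, w, b):
    """Step activation on the weighted sum plus bias."""
    return 1 if w[0] * x[0] + w[1] * x[1] + b > 0 else 0


def train(data, lr=1, max_epochs=100):
    """Repeat passes over the data until one full pass makes no mistakes.

    Returns (weights, bias, converged).
    """
    w, b = [0, 0], 0
    for _ in range(max_epochs):
        mistakes = 0
        for x, target in data:
            error = target - predict(x, w, b)
            if error != 0:
                # Self-correction: nudge the weights and bias toward the target.
                w = [w[0] + lr * error * x[0], w[1] + lr * error * x[1]]
                b += lr * error
                mistakes += 1
        if mistakes == 0:
            return w, b, True
    return w, b, False


AND_DATA = [((0, 0), 0), ((1, 0), 0), ((0, 1), 0), ((1, 1), 1)]
OR_DATA = [((0, 0), 0), ((1, 0), 1), ((0, 1), 1), ((1, 1), 1)]

for name, data in [("AND", AND_DATA), ("OR", OR_DATA)]:
    w, b, converged = train(data)
    outputs = [predict(x, w, b) for x, _ in data]
    print(name, "converged:", converged, "outputs:", outputs)
```

Both gates converge within a handful of passes, because a single straight line is enough to separate their 0s from their 1s.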

Sudden Despair: The Unsolvable "XOR" Riddle

That hope was shattered in 1969 when AI pioneers Marvin Minsky and Seymour Papert raised the XOR problem in their book Perceptrons. XOR (Exclusive OR) outputs 1 only when the inputs are different.

(0, 0) = 0

(0, 1) = 1

(1, 0) = 1

(1, 1) = 0

Minsky pointed out:

"Try separating 0 and 1 with a single straight line on a graph."

It is impossible. To isolate the 1s, you need either a curve or two lines.
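The reason can be stated in one line of algebra. (0, 0) = 0 requires b ≤ 0, while (0, 1) = 1 and (1, 0) = 1 require w_2 + b > 0 and w_1 + b > 0. Adding the last two gives w_1 + w_2 + 2b > 0, so w_1 + w_2 + b > -b ≥ 0, which contradicts (1, 1) = 0. The sketch below is only an illustration of that fact, not a proof: it tries every line on a small, arbitrarily chosen grid of weights and counts how many reproduce each truth table.

```python
from itertools import product


def predict(x, w1, w2, b):
    """Single perceptron with a step activation at zero."""
    return 1 if w1 * x[0] + w2 * x[1] + b > 0 else 0


def count_separating_lines(table, grid):
    """Count (w1, w2, b) combinations on the grid that match every row of the table."""
    count = 0
    for w1, w2, b in product(grid, repeat=3):
        if all(predict(x, w1, w2, b) == y for x, y in table.items()):
            count += 1
    return count


# Hypothetical search grid: -2.0 to 2.0 in steps of 0.5 for each parameter.
GRID = [i / 2 for i in range(-4, 5)]

AND = {(0, 0): 0, (0, 1): 0, (1, 0): 0, (1, 1): 1}
XOR = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}

print("AND has a separating line:", count_separating_lines(AND, GRID) > 0)  # True
print("Lines that solve XOR:", count_separating_lines(XOR, GRID))           # 0
```

A finer or wider grid would not change the XOR result, since the algebra above rules out every possible choice of weights.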

The Arrival of the First "AI Winter"

Because the Perceptron was limited to Linear Separation, it couldn't solve this simple logic problem. This critique contributed to cuts in government funding and a loss of public interest, stalling neural network research for over a decade.

5. Wrapping Up

The Perceptron, our "AI ancestor," fell at the small hurdle of XOR because it could only think in straight lines. This led to the "AI Winter." However, some didn't give up. They thought: "If one line doesn't work, why not use two? Or stack them in layers?"

This "simple" idea became the origin of the massive neural networks behind ChatGPT and self-driving cars. Next time, we will explore the Multi-Layer Perceptron (MLP) and the Backpropagation algorithm—the "whip" that tells the network, "You're wrong, try again!"—which elegantly solved the XOR problem.

Key Keyword Checklist

MCP (McCulloch-Pitts) Neuron: The first model to mathematically mimic biological brain cells.

Weight & Bias: Key variables that determine input importance and adjust the decision boundary.

Threshold: The "gatekeeper" deciding whether to fire a signal.

Linear Separation: The Perceptron’s great strength and its fatal limitation.

XOR Problem: The "unsolvable riddle" that triggered the first AI Winter.